Llamafile is a single standalone executable that you download and run to start a Large Language Model (LLM) locally. It runs on all major operating systems, and besides text generation it lets you upload images and ask questions about them (with a multimodal model such as LLaVA). Its API also follows the OpenAI API conventions, so you can use the ‘openai’ Python package to generate output from Llamafile programmatically, without needing a real API key.

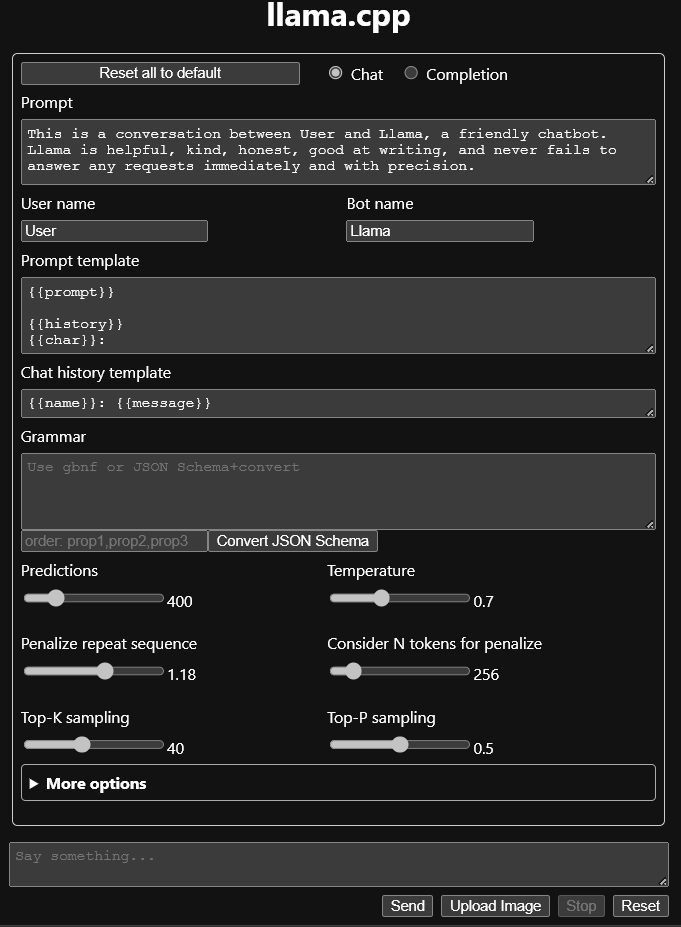

Llamafile works very well on Linux, but I had some issues running it on Windows. Since Windows does not support executables larger than 4GB, you have to download the software and the model weights separately, as mentioned here. By default, Llamafile starts a server on port 8080 with a basic web interface, which looks like this:

Below is some Python code that uses the ‘openai’ package to generate text programmatically with Llamafile:

#!/usr/bin/env python3

The above program generates the following output:

{
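The ‘openai’ package ultimately sends plain HTTP requests, so the same kind of request can also be made with Python's standard library alone. The following is a minimal sketch (not the program whose output is shown above), assuming Llamafile is serving on its default port 8080 and exposes an OpenAI-style `/v1/chat/completions` endpoint. The model name `LLaMA_CPP`, the `Bearer no-key` header, and the prompts are illustrative placeholders.

```python
#!/usr/bin/env python3
"""Minimal sketch: send an OpenAI-style chat request to a local Llamafile
server using only the Python standard library (no openai package)."""
import json
import sys
import urllib.request

# Assumption: Llamafile is serving on its default port 8080.
BASE_URL = "http://localhost:8080/v1"


def build_chat_request(prompt: str, model: str = "LLaMA_CPP") -> dict:
    """Return an OpenAI-style chat completion request body.

    The model name is a placeholder; a local single-model server is
    assumed not to depend on it.
    """
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": prompt},
        ],
    }


def chat(prompt: str, base_url: str = BASE_URL) -> str:
    """POST the request to /chat/completions and return the reply text."""
    req = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(build_chat_request(prompt)).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # No real key is needed; this placeholder just fills the header.
            "Authorization": "Bearer no-key",
        },
    )
    with urllib.request.urlopen(req, timeout=300) as resp:
        reply = json.load(resp)
    # OpenAI-style responses keep the text at choices[0].message.content.
    return reply["choices"][0]["message"]["content"]


if __name__ == "__main__":
    try:
        print(chat("Write a haiku about running language models locally."))
    except OSError as exc:  # URLError, HTTPError and timeouts are OSError subclasses
        print(f"Could not reach Llamafile at {BASE_URL}: {exc}", file=sys.stderr)
```

Keeping the request body in `build_chat_request()` separate from the network call makes it easy to inspect or reuse the payload without a running server.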

I have tested the 4GB Llamafile version on a CPU, and it is impressive considering its small size and low memory requirements.

Another great feature of Llamafile is the ability to upload images and ask questions about them. I wrote a small program that takes an image path as a parameter and outputs what the model thinks the image contains:

import sys
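A hypothetical sketch of such a program follows. It assumes Llamafile's server behaves like the llama.cpp server it is built on: a `/completion` endpoint on port 8080 that accepts base64-encoded images in an `image_data` array, with an `[img-ID]` tag in the prompt marking where each image goes. The `USER:`/`ASSISTANT:` prompt template, the image id `10`, and `n_predict` of 256 are assumptions, so check the server documentation for your Llamafile version before relying on them.

```python
#!/usr/bin/env python3
"""Hypothetical sketch: ask a multimodal Llamafile server what an image contains.

Assumes a llama.cpp-style /completion endpoint with an `image_data` field,
where `[img-ID]` in the prompt marks where the image embedding is placed.
"""
import base64
import json
import sys
import urllib.request

SERVER_URL = "http://localhost:8080/completion"  # assumed default host/port
IMAGE_ID = 10  # arbitrary id linking the prompt tag to the image entry


def build_image_request(image_bytes: bytes, question: str) -> dict:
    """Build a request body that embeds one base64-encoded image."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    prompt = f"USER:[img-{IMAGE_ID}]{question}\nASSISTANT:"
    return {
        "prompt": prompt,
        "image_data": [{"data": encoded, "id": IMAGE_ID}],
        "n_predict": 256,  # cap on the number of generated tokens
    }


def describe_image(path: str, question: str = "Describe the image in detail.") -> str:
    """Send the image at `path` to the server and return the model's answer."""
    with open(path, "rb") as f:
        payload = build_image_request(f.read(), question)
    req = urllib.request.Request(
        SERVER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=300) as resp:
        # A llama.cpp-style /completion response carries the text in "content".
        return json.load(resp)["content"].strip()


if __name__ == "__main__":
    if len(sys.argv) == 2:
        try:
            print(describe_image(sys.argv[1]))
        except OSError as exc:  # missing file, connection refused, HTTP errors
            print(f"Request failed: {exc}", file=sys.stderr)
    else:
        print(f"usage: {sys.argv[0]} IMAGE_PATH", file=sys.stderr)
```

Run it as `python describe_image.py photo.jpg` (the script and image names are placeholders) while the multimodal Llamafile server is running.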

I am also impressed with Llamafile’s image inference capabilities. Here are a couple of sample outputs from the program:

Input image 1

The image features an overturned tree log in a forest setting, with some roots exposed and rocks nearby. The fallen trunk seems to be partially covered by the surrounding environment, making it appear like it might have been cut or damaged. Additionally, there is another log laying on its side near the main log, contributing to the scene’s natural ambiance.

Input image 2

This scene features a large stone building with arches, statues, and columns adorned around it. There is also a large fountain in the courtyard of the building. The architecture suggests that this building may be a castle or historical site, featuring elaborate stonework and decorative elements like carved faces on pillars and statues surrounding the fountain. The presence of birds flying nearby adds a lively atmosphere to the scene. Overall, it appears to be a well-preserved architectural masterpiece that captures the viewer’s attention with its intricate details and design elements.

Over time, Large Language Models (LLMs) have proliferated. What disappoints me, however, is the limited support for running these models on consumer-grade hardware. I believe Llamafile is a step in the right direction, and I am optimistic that we will see similar developments for text-to-image generative models in the near future.